Voxelscape

A GPU-based ray caster for semi-voxel terrains, as seen in the old NovaLogic titles (e.g. Delta Force or Comanche).

Development Iteration


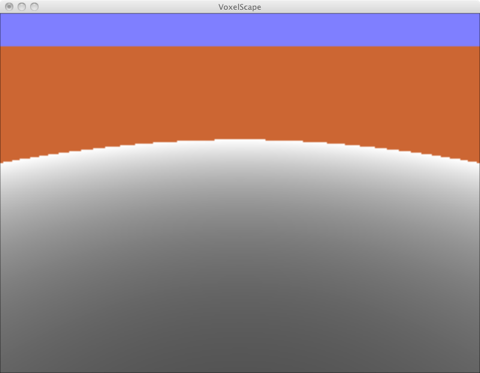

Ray casting

The first output image. The shader stops after a predetermined number of iterations and colours each pixel by its iteration count, from black (0 iterations) to white (iteration maximum reached). Because I generate normalised rays from the near plane, the far ‘horizon’ is curved around the camera. Sampling is done into a 320x240 (VGA :)) FBO, which is upscaled to the display resolution.
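The iteration-count colouring can be sketched on the CPU as follows. This is plain C rather than shader code, and `MAX_ITERATIONS`, the fixed step size and the flat-floor hit test `below_floor` are assumptions made for illustration only; the actual shader may differ.

```c
/* Hypothetical limit; the text only says the count is "predetermined". */
enum { MAX_ITERATIONS = 128 };

typedef struct { float x, y, z; } vec3;

/* Hypothetical hit test: a flat floor at height 0 stands in for the terrain. */
static int below_floor(vec3 p) { return p.y <= 0.0f; }

/* March from origin along dir in fixed steps and return the iteration
   count at which the ray stopped (MAX_ITERATIONS if nothing was hit). */
static int march_iterations(vec3 origin, vec3 dir, float step)
{
    vec3 p = origin;
    for (int i = 0; i < MAX_ITERATIONS; ++i) {
        if (below_floor(p)) return i;
        p.x += dir.x * step;
        p.y += dir.y * step;
        p.z += dir.z * step;
    }
    return MAX_ITERATIONS;
}

/* Map the iteration count to a grey level: 0 -> black, maximum -> white. */
static float iteration_shade(int iterations)
{
    return (float)iterations / (float)MAX_ITERATIONS;
}
```

A ray that never hits anything returns `MAX_ITERATIONS` and is therefore shaded white, which matches the bright horizon described above.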

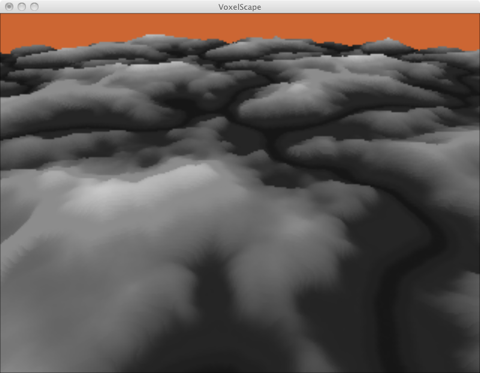

Height Mapping

Loaded a texture as the height map. At each iteration along the view ray, the ray's height is compared with the height map at that position. If the ray is already below the terrain, we stop and determine the pixel colour: in this case from black for zero height to white for maximum height. This maps neatly onto normalised texture access, as the stored value is between 0.0 and 1.0 as well.
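A minimal CPU-side sketch of this height test, in plain C. The 4x4 `HEIGHTS` table, the nearest-texel lookup and the names `sample_height` and `march_height` are hypothetical stand-ins for the real texture and shader code; the y axis is assumed to point up.

```c
enum { MAP_SIZE = 4, MAX_STEPS = 64 };

/* Hypothetical 4x4 height map, values already normalised to [0, 1]. */
static const float HEIGHTS[MAP_SIZE][MAP_SIZE] = {
    { 0.00f, 0.25f, 0.50f, 0.25f },
    { 0.25f, 0.50f, 0.75f, 0.50f },
    { 0.50f, 0.75f, 1.00f, 0.75f },
    { 0.25f, 0.50f, 0.75f, 0.50f },
};

/* Floor without libm, so the sketch has no external dependencies. */
static int floor_to_int(float x)
{
    int i = (int)x;
    return (x < (float)i) ? i - 1 : i;
}

/* Nearest-texel lookup with repeat wrapping (u, v in texture space). */
static float sample_height(float u, float v)
{
    int x = floor_to_int(u * MAP_SIZE) % MAP_SIZE;
    int y = floor_to_int(v * MAP_SIZE) % MAP_SIZE;
    if (x < 0) x += MAP_SIZE;
    if (y < 0) y += MAP_SIZE;
    return HEIGHTS[y][x];
}

/* Step along the ray and stop at the first sample that lies below the
   terrain. The terrain height at that sample doubles as the grey level.
   Returns -1.0f if nothing was hit within MAX_STEPS. */
static float march_height(const float origin[3], const float dir[3], float step)
{
    float p[3] = { origin[0], origin[1], origin[2] };
    for (int i = 0; i < MAX_STEPS; ++i) {
        float h = sample_height(p[0], p[2]);
        if (p[1] < h) return h;
        for (int k = 0; k < 3; ++k) p[k] += dir[k] * step;
    }
    return -1.0f;
}
```

Because the stored heights are already in the range 0.0 to 1.0, the returned value can be used directly as the grey level without any rescaling.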

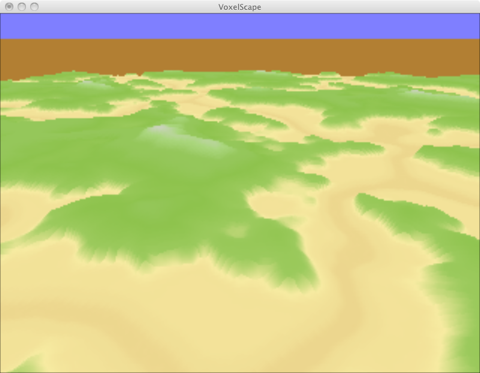

Colouring

A one-dimensional colour texture is used to determine the pixel colour, with the height serving as the texture coordinate. Because the terrain is only stored as a texture (the only existing ‘geometry’ is the near plane), it automatically wraps and repeats -- and is therefore endless -- if the correct texture wrap mode (e.g. GL_REPEAT) is chosen.
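The lookup and the wrapping can be illustrated with a small C sketch. The four-entry `RAMP` and its colours are invented for this example, and `wrap_repeat` mimics on the CPU what OpenGL's GL_REPEAT wrap mode does to the terrain texture coordinates.

```c
typedef struct { unsigned char r, g, b; } rgb;

enum { RAMP_SIZE = 4 };

/* Hypothetical colour ramp: water, sand, grass, snow. */
static const rgb RAMP[RAMP_SIZE] = {
    {  30,  80, 200 },
    { 210, 190, 140 },
    {  60, 160,  60 },
    { 255, 255, 255 },
};

/* Look up the colour for a normalised height, clamping at both ends. */
static rgb colour_for_height(float h)
{
    if (h < 0.0f) h = 0.0f;
    if (h > 1.0f) h = 1.0f;
    int i = (int)(h * RAMP_SIZE);
    if (i >= RAMP_SIZE) i = RAMP_SIZE - 1;
    return RAMP[i];
}

/* Repeat wrapping: keep only the fractional part of the coordinate,
   so the terrain tiles endlessly in both directions. */
static float wrap_repeat(float u)
{
    int i = (int)u;
    if (u < (float)i) i -= 1;   /* floor for negative values */
    return u - (float)i;
}
```

With repeat wrapping, a terrain coordinate of 1.25 or -0.75 reads the same texel as 0.25, which is why the landscape never ends.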

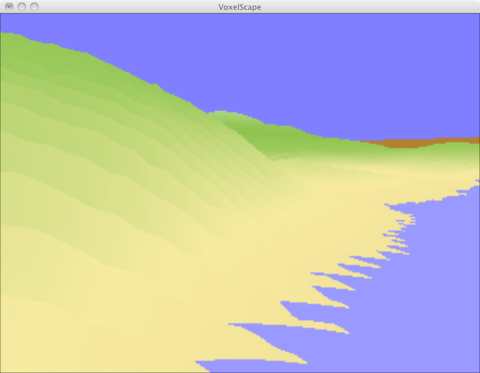

Sampling Artefacts

A close-up of a ‘coastline’. A waterline height is declared, and once the ray falls below it, we stop and colour the pixel with a predetermined water colour. The coastline shows pretty bad sampling artefacts. Right now the first sample below the height field is taken as the result, whereas a better solution would be to calculate an approximate intersection between the ray and the height-field ‘function’ between this and the previous iteration. Increasing the iteration count helps to a degree. All the sampling artefacts are again curved and almost parallel to the near plane, whereas the artefacts in relief mapping are parallel to the U/V plane.
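One common way to approximate that intersection is a single linear interpolation (secant) step between the last sample above the terrain and the first one below it. The C sketch below assumes that both the ray and the height field are roughly linear between the two samples; `refine_hit` and its parameters are hypothetical names.

```c
/* t_prev, t_curr: ray parameters of the previous and current sample.
   d_prev, d_curr: ray height minus terrain height at those samples,
   with d_prev > 0 (still above) and d_curr <= 0 (already below).
   Returns the ray parameter where the interpolated difference is zero. */
static float refine_hit(float t_prev, float d_prev, float t_curr, float d_curr)
{
    float denom = d_prev - d_curr;
    if (denom <= 0.0f) {
        return t_curr;          /* degenerate input: keep the plain sample */
    }
    return t_prev + (t_curr - t_prev) * (d_prev / denom);
}
```

For example, if the ray was 0.25 above the terrain at t = 1 and 0.75 below it at t = 2, the refined hit lies at t = 1.25 instead of snapping to t = 2.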

Noise and Fog

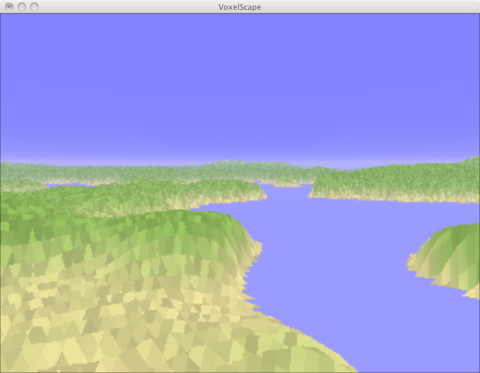

Added a noise texture to make the terrain more interesting. Once the algorithm has found a hit or reached the maximum iteration count, a fog coordinate can be calculated and the final colour interpolated with the fog colour.
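A minimal sketch of the fog blend in plain C. The text does not say which fog function is used, so linear fog between hypothetical `fog_start` and `fog_end` distances is assumed here.

```c
typedef struct { float r, g, b; } colour;

static float clamp01(float x)
{
    return x < 0.0f ? 0.0f : (x > 1.0f ? 1.0f : x);
}

/* Linear fog coordinate: 0 at fog_start, 1 at fog_end and beyond. */
static float fog_factor(float distance, float fog_start, float fog_end)
{
    return clamp01((distance - fog_start) / (fog_end - fog_start));
}

/* Interpolate from the terrain colour towards the fog colour. */
static colour apply_fog(colour terrain, colour fog, float factor)
{
    colour out;
    out.r = terrain.r + (fog.r - terrain.r) * factor;
    out.g = terrain.g + (fog.g - terrain.g) * factor;
    out.b = terrain.b + (fog.b - terrain.b) * factor;
    return out;
}
```

Rays that run out of iterations can simply be given a factor of 1.0, so the unfinished horizon disappears into the fog colour.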

Reflections

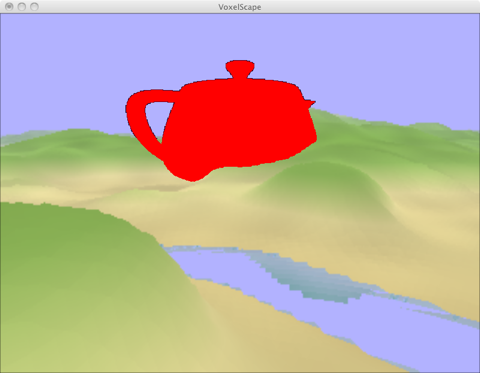

Now this looks a bit better :) The raycaster interpolates between the last two samples (the one above and the one below the height field) to get the correct height. This gives smooth surfaces. The final colour is modulated with a stretched noise texture to break up the uniformity of the terrain. Reflections are done by reflecting the ray on the water surface, which is at a fixed height. Finally, the forward-rendered object is composited into the image (see below).
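The reflection step can be sketched in C as below, assuming the water surface is a horizontal plane with the y axis pointing up. `hit_water` and `reflect_on_water` are hypothetical helper names.

```c
typedef struct { float x, y, z; } vec3;

/* Intersect a downward-pointing ray with the water plane y = water_height.
   Returns 1 and stores the hit point if the ray reaches the water,
   0 if it starts at or below the water or does not point downwards. */
static int hit_water(vec3 origin, vec3 dir, float water_height, vec3 *hit)
{
    if (dir.y >= 0.0f || origin.y <= water_height) {
        return 0;
    }
    float t = (water_height - origin.y) / dir.y;
    hit->x = origin.x + dir.x * t;
    hit->y = water_height;
    hit->z = origin.z + dir.z * t;
    return 1;
}

/* Reflecting on a horizontal plane only flips the vertical component. */
static vec3 reflect_on_water(vec3 dir)
{
    dir.y = -dir.y;
    return dir;
}
```

The march would then continue from the hit point along the reflected direction, and the colour it finds can be blended with the water colour.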

Algorithm

Notes on gl_FragDepth in a ray caster

To combine multiple buffers using their depth textures, the depth values have to be in the same range and use the same scaling. Several buffers are used here, and most of them use the standard OpenGL depth range and depth test. The ray caster therefore has to write an OpenGL-compatible z-buffer value, which follows this formula (it can be derived by multiplying the z coordinate with the modelview-projection transform):

float r; // distance along ray -- the same as the vertex - eye distance

float zf; // farplane distance

float zn; // nearplane distance

gl_FragDepth = zf / (zf - zn) * (1.0 - zn / r);
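The formula is easy to sanity-check on the CPU. The C sketch below evaluates the same expression, assuming the default depth range of 0 to 1 and a standard perspective projection; `ray_depth` is a hypothetical name. In the standard derivation `r` is the eye-space depth measured along the view axis.

```c
/* Window-space depth for a point at eye-space distance r, given the
   near plane distance zn and far plane distance zf (0 < zn < zf). */
static float ray_depth(float r, float zn, float zf)
{
    return zf / (zf - zn) * (1.0f - zn / r);
}
```

A point on the near plane maps to 0 and a point on the far plane maps to 1, with the usual non-linear distribution in between: for zn = 1 and zf = 3, the midpoint r = 2 already yields 0.75.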

Depth Textures in FBOs

FBOs can bind render buffers or bindable textures at the depth buffer attachment point. Depth textures provide readback facilities for example for shadow mapping or general depth compares.

To speed up FBO rendering operations that use fullscreen quads, I usually don’t clear the buffer explicitly, but simply draw a screen-space fullscreen quad with GL_DEPTH_TEST disabled. This almost always works, because the content of the buffer gets overwritten by the new texture anyway -- in this case neither the colour nor the depth buffer needs to be cleared. However, this fails if depth textures are needed. Disabling GL_DEPTH_TEST switches off the complete depth stage, writes included, not just the comparison. So with a disabled depth test, no values are written to the depth texture! Clearing the colour and depth buffers and enabling the depth test fixed this problem. (Keeping the test enabled with glDepthFunc(GL_ALWAYS) is the usual way to write depth unconditionally.)